On March 17, 2018, The New York Times, alongside The Guardian and The Observer, reported that…

Cambridge Analytica, a data analysis firm that worked on President Trump’s 2016 campaign, and its related company, Strategic Communications Laboratories, pilfered the data of 50 million Facebook users and secretly kept it. This revelation and its implications, that Facebook allowed data from millions of its users to be captured and improperly used to influence the presidential election, ignited a conflagration that threatens to engulf the already tattered reputation of the embattled social media giant.

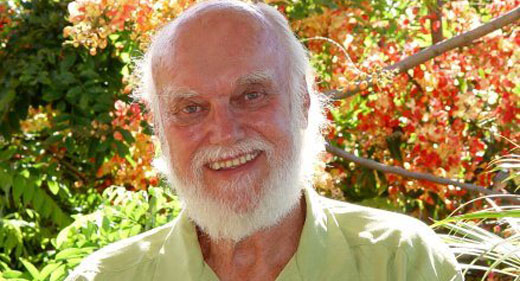

For five days, Mark Zuckerberg, Facebook’s CEO, remained what many called “deafeningly silent,” before finally posting a lengthy response to his personal Facebook page. He then spoke to a small handful of news outlets, including WIRED, offering apologies, conceding mistakes, and, surprisingly, even entertaining regulation for his sprawling company.

From the moment the news about Cambridge broke, the media hydrant gushed out report after report. For those who want a linear representation of the week’s news, below you can find WIRED’s extensive Cambridge Analytica coverage—which dates back nearly two years.

A Lot of People Are Saying Trump’s New Data Team Is Shady

It started in the summer of 2016, when Trump’s team hired Cambridge Analytica, a data analysis firm that had worked with Ben Carson and Ted Cruz during their presidential primary runs. As WIRED senior writer Issie Lapowsky reported at the time, the firm claimed to target voters based upon their psychological profiles, but some critics called “the company’s ‘psychographic’ targeting claims hype at best and snake oil at worst.” A Trump aide told WIRED at the time that the data from Cambridge was “‘one cog in a very large engine’ fueled by information from the Republican National Committee and other vendors.”

Trump’s Big Data Mind Explains How He Knew Trump Could Win

After Trump won the presidential election, in November 2016, Lapowsky reached out to Matt Oczkowski, director of product for Cambridge Analytica, Trump’s data team. As Lapowsky wrote then, “The election upset already has inspired headlines about data being dead. Trump did, after all, reject the need for data, only to hire Cambridge Analytica during the summer after clinching the nomination. But Oczkowski believes such a characterization is as much a misreading of the situation as the polls themselves. ‘Data is not dead,’ he says, before repeating the old political adage that data doesn’t win campaigns, it only wins margins. ‘Data’s alive and kicking. It’s just how you use it and how you buck normal political trends to understand your data.’”

What Did Cambridge Analytica Really Do for Trump’s Campaign?

Nearly a year later, in October 2017, news broke that Cambridge Analytica’s CEO, Alexander Nix, had approached WikiLeaks founder Julian Assange in 2016 to exploit Hillary Clinton’s private emails, a revelation that raised concerns about Cambridge’s role in Trump’s 2016 campaign. People who worked with Trump’s campaign quickly moved to downplay Cambridge’s role, saying that the Republican National Committee was the primary source of voter data, and “Any claims that voter data from any other source played a key role in the victory are false.” This prompted a lot of questions about who did what when.

Cambridge Analytica Took 50M Facebook Users’ Data—And Both Companies Owe Answers

Which brings us up to date. On March 17, 2018, The New York Times, along with The Guardian and The Observer, reported that Cambridge Analytica and its related company, SCL, pilfered data on 50 million Facebook users and secretly kept it. Just hours before the report was set to publish, Facebook suspended both Cambridge and SCL while it investigates whether both companies retained Facebook user data that had been provided by third-party researcher Aleksandr Kogan of the company Global Science Research, a violation of Facebook’s terms. Facebook says it knew about the breach, but had received legally binding guarantees from the company that all of the data was deleted. “We are moving aggressively to determine the accuracy of these claims. If true, this is another unacceptable violation of trust and the commitments they made,” Paul Grewal, Facebook’s vice president and general counsel, wrote in a blog post.

The Noisy Fallacies of Psychographic Targeting

Of course, all of this prompted concerns about what “psychographic targeting” really means. WIRED contributor Antonio Garcia Martinez, a former Facebook employee who worked on the monetization team, explored Cambridge Analytica’s ad effectiveness claims, explaining that its efforts probably didn’t work, but Facebook should be embarrassed anyway.

Facebook in the Age of the Big Tech Whistleblower

When the Cambridge news broke, much of it felt . . . familiar. But as WIRED senior writer Jessi Hempel notes, “In a flash, [whistleblower Christopher] Wylie’s story made the idea of misused big data concrete—and urgent.”

Cambridge Analytica Execs Caught Discussing Extortion and Fake News

Two days later, on March 19, 2018, Britain’s Channel 4 News released a series of undercover videos filmed over the course of the past year that showed executives at Cambridge Analytica appearing to say they could extort politicians, send women to entrap them, and help proliferate propaganda to help their clients.

Facebook Owes You More Than This

WIRED news editor Brian Barrett made the argument “that Facebook has been a poor steward of your data, asking more and more of you without giving you more in return—and often not even bothering to let you know. It has repeatedly failed to keep up its side of the deal, and expressed precious little interest in making good. …Facebook users need to ask themselves very seriously exactly what kind of bargain they’ve struck—and how long they’re willing to put up with Facebook changing the terms.”

A Hurricane Flattens Facebook

Then on Tuesday, March 20, WIRED editor-in-chief Nicholas Thompson and contributing editor Fred Vogelstein wrote a comprehensive account of the previous three days, laying out the situation inside Facebook as the scandal unfurled. “As the storm built over the weekend, Facebook’s executives, including Mark Zuckerberg and Sheryl Sandberg, strategized and argued late into the night. They knew that the public was hammering them, but they also believed that the fault lay much more with Cambridge Analytica than with them. Still, there were four main questions that consumed them. How could they tighten up the system to make sure this didn’t happen again? What should they do about all the calls for Zuckerberg to testify? Should they sue Cambridge Analytica? And what could they do about psychologist Joseph Chancellor, who had helped found Kogan’s firm and who now worked, of all places, at Facebook?” Ultimately, Thompson and Vogelstein’s reporting led them to ask, “Why didn’t Facebook conduct an audit—a decision that may go down as Facebook’s most crucial mistake?”

The Complete Guide to Facebook Privacy

The “breach” freaked out thousands of people, and soon #deletefacebook was trending on Twitter. WIRED published its own complete guide to locking down your Facebook privacy (or deleting your account altogether).

Cambridge Analytica Suspends CEO Alexander Nix Amid Scandals

The next body blow came to Cambridge Analytica, with the company suspending its CEO on March 20, 2018.

Mark Zuckerberg Speaks Out on Cambridge Analytica Scandal

On Wednesday, March 21, five days after the news first broke, Zuckerberg posted a lengthy response on his personal Facebook page, apologizing for the company’s failure to protect its users’ data and announcing changes to the platform intended to do just that. He also said the company plans to audit apps that were able to access large amounts of information, and that Facebook will ban apps that don’t agree to an audit.

The Irreversible Damage of Mark Zuckerberg’s Silence

As WIRED’s Jessi Hempel wrote after Zuckerberg posted his response, the question quickly became, “Can Mark Zuckerberg be trusted? This is an existential question for the company. Because there’s one great challenge with empowering trust in people over institutions: People are fickle. They change. They disappoint. Sometimes, in moments of crisis, they ghost us. Individuals are easily taken down by a moment that shifts our perception of their character. Which leaves Facebook’s leader with an unenviable task: If Zuckerberg wants us to believe now that his company is not vulnerable, he must shore up trust in himself as an individual. It’s his only way forward.”

Mark Zuckerberg Talks to WIRED About Facebook’s Privacy Problem

Then, on Wednesday afternoon, Zuckerberg granted interviews to a small handful of media outlets, including WIRED. When editor-in-chief Nick Thompson spoke to Zuckerberg, he asked him about the recent crisis, the mistakes Facebook made, and how the company might be regulated.

What Would Regulating Facebook Look Like?

Zuckerberg was surprisingly open to discussing regulation for Facebook. As he noted in his interview with WIRED, guidelines rather than explicit regulations might be a model. He pointed to Germany, where hate speech laws require Facebook and other companies to remove offending posts within 24 hours. “The German model—you have to handle hate speech in this way—in some ways that’s actually backfired,” Zuckerberg says. “Because now we are handling hate speech in Germany in a specific way, for Germany, and our processes for the rest of the world have far surpassed our ability to handle that. But we’re still doing it in Germany the way that it’s mandated that we do it there. So I think guidelines are probably going to be a lot better.”

Mark Zuckerberg Speaks, But Is He Listening?

After the interview came the parsing of Zuckerberg’s comments, including one analysis from WIRED’s own Jason Tanz. “[Zuckerberg] neglects the fact that Facebook itself is the source of much of that change. The debates around fake news and hate speech did not happen to befall Facebook; we are having them in part because of Facebook. Zuckerberg often paints his company in this light, as fundamentally reflecting its users’ and society’s behavior rather than shaping it. Fixing fake news is a ‘hard problem’—ignoring Facebook’s role in creating the problem in the first place. The social norms around privacy are ‘just something that’s evolved over time,’ a stance that elides his company’s interest in nudging that evolution along.”

Key Takeaways From Mark Zuckerberg’s Facebook Media Blitz

Issie Lapowsky also mined Zuckerberg’s handful of interviews for news nuggets, finding four key takeaways: 1) Macedonian fake news artists are still at it; 2) Zuckerberg may support some regulation; 3) Zuckerberg would (maybe) testify before Congress; and 4) Facebook’s fixes won’t come cheap.

Facebook’s New Data Restrictions Will Handcuff Even Honest Researchers

And what about the researchers who use Facebook data for their studies? WIRED science writer Robbie Gonzalez took a look at the impact the social media company’s new rules could have on the people who want to use that information for good purposes.

Inside the Two Years That Shook Facebook—and the World

If you’d like more insight into the company, a must-read is Nicholas Thompson and Fred Vogelstein’s March cover story on the past two years at Facebook, and how, during that time, a host of circumstances not only defined the platform, but shaped our world.